Google Firebase with a side of AWS Amplify

Dual hosting a Hugo static site with two of the largest CDNs in the world.

TL;DR

In this post, I’ll discuss a rabbit hole that I went down with only a thin excuse as the reason. That excuse was a Reddit reply to a post shared from this site:

I can’t view on my desktop because your site is blocked on my network. Sorry

The rabbit hole in question? Dual stacking this site across Google and Amazon infrastructure.

Introducing AWS Amplify

I first read about Amplify in this blog post. It discussed the launch of Amplify Console, an evolution of the framework that preceded it into a service offering. It was described as:

Scalable hosting for static web apps with serverless backends

What made it attractive was the list of Firebase-like features:

- Automatic CDN via CloudFront

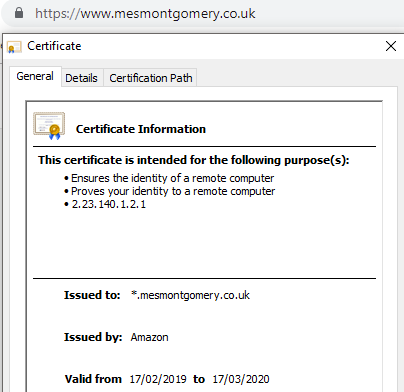

- Automatic free HTTPS

What made it compelling was this:

- Hugo native support (build from source files)

- Continuous automated deployment of releases in reaction to repository updates

Hugo on AWS Amplify

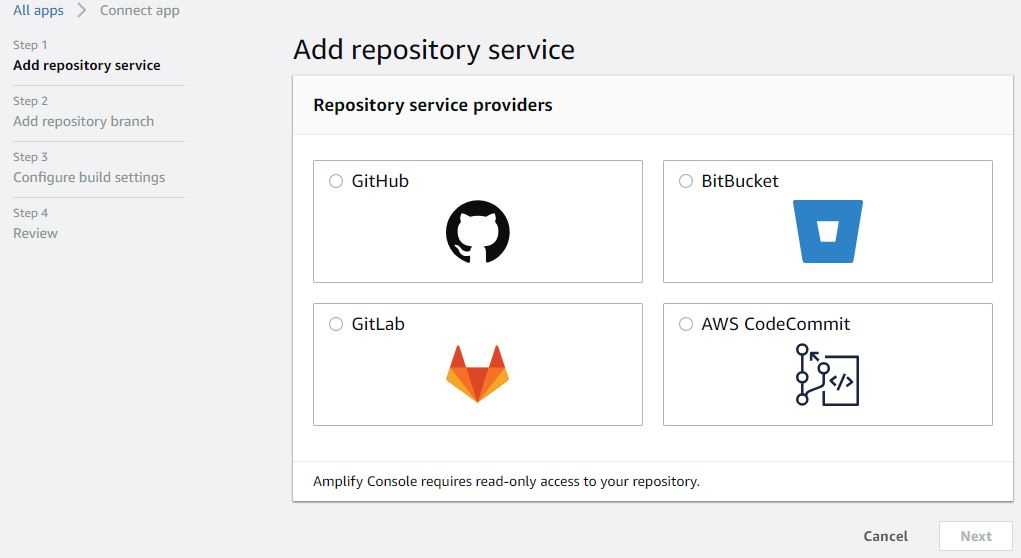

I had to consider how I wanted to consume Amplify. After some thought, I settled on a parallel deployment with a custom domain of www.mesmontgomery.co.uk. It would allow me to compare with Firebase without load balancing in play. Getting started was as simple as:

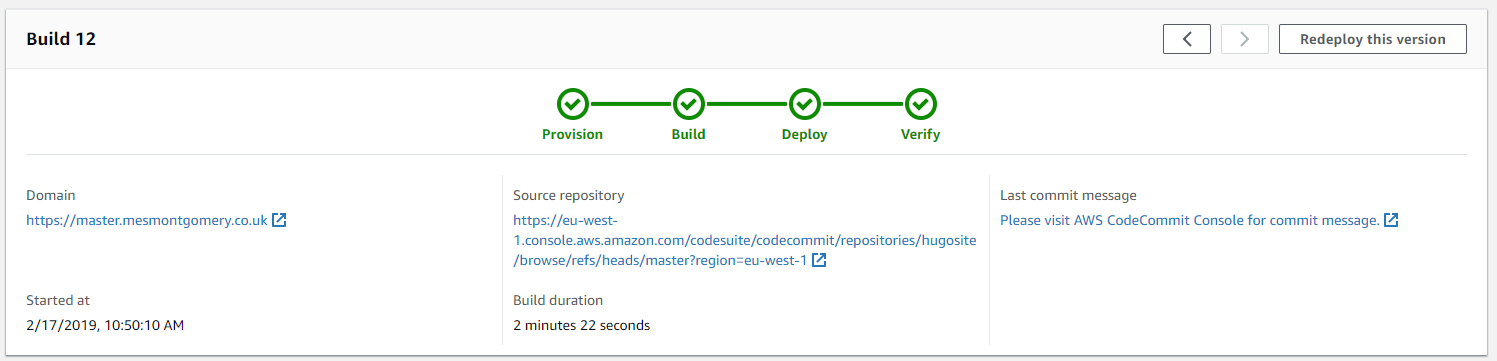

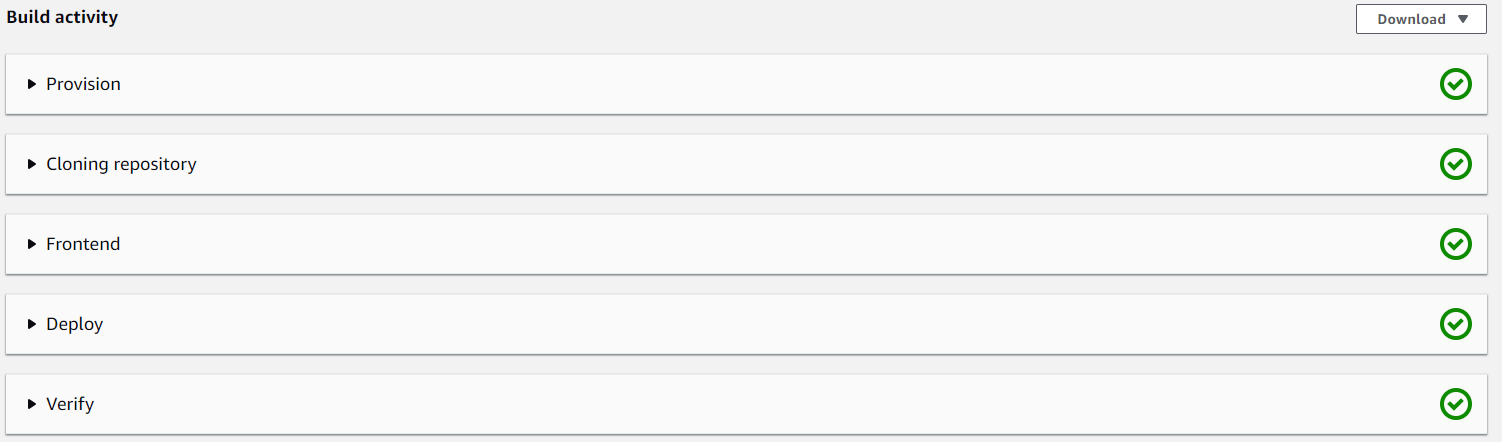

I created a CodeCommit repository and populated the master branch with the current build files. Amplify detected the Hugo framework automatically upon the branch connection. Amplify applied default build settings, and a build started. The application was available with an Amplify URL in the form https://master.generatedID.amplifyapp.com/. All in about 2 minutes.

No documentation was required to get to this point, and my jaw was on the floor.

Establishing a new workflow

Bringing Amplify into this project forced a certain amount of structure by requiring Git. I follow these steps to publish to both stacks:

- Build locally and preview live content on Hugo Server.

- Populate the \Public folder with static files via a Hugo build. Deploy to Firebase.

- Check Firebase matches the local content.

- Merge into the master branch and push to CodeCommit. Wait ~2 minutes.

- Compare the new Amplify content with the Firebase content.
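The command-line parts of the steps above can be sketched as a small Python helper. This is only a sketch under assumptions: it presumes the `hugo`, `firebase` and `git` CLIs are installed, that the CodeCommit remote is named `origin`, and that work happens on a hypothetical `draft` branch before merging into master. None of those names come from my actual setup notes. The manual checks (previewing on Hugo Server, comparing the live sites) stay manual.

```python
import subprocess
from typing import List


def publish_commands(work_branch: str = "draft",
                     branch: str = "master",
                     remote: str = "origin") -> List[List[str]]:
    """Return the CLI commands for one publish run, in order.

    work_branch and remote are hypothetical names used for illustration.
    """
    return [
        ["hugo"],                                     # build static files into the public folder
        ["firebase", "deploy", "--only", "hosting"],  # publish the build to Firebase Hosting
        ["git", "checkout", branch],                  # switch to the branch Amplify watches
        ["git", "merge", work_branch],                # bring the new content into it
        ["git", "push", remote, branch],              # the push triggers the Amplify build
    ]


def run_all(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print("$ " + " ".join(cmd))
        if not dry_run:
            # check=True stops the run at the first failing command
            subprocess.run(cmd, check=True)
```

Calling `run_all(publish_commands())` prints the commands without running anything, which is a safe way to review them. In practice I would still pause after the Firebase deploy to check the site before merging.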

In future, it would be interesting to have the Amplify build trigger a Firebase deploy automatically. I’ll wait until I’m entirely comfortable with the above.

I’ve linked a couple of articles at the bottom which I find useful as a reference for Git branching.

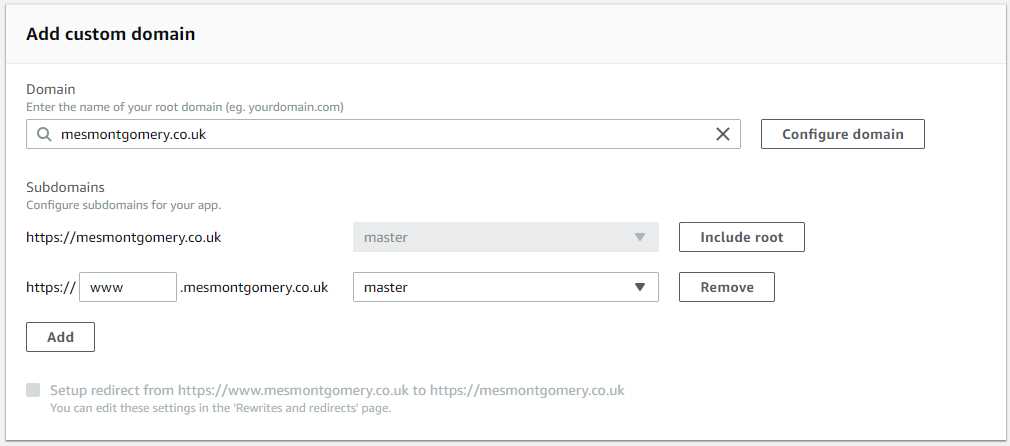

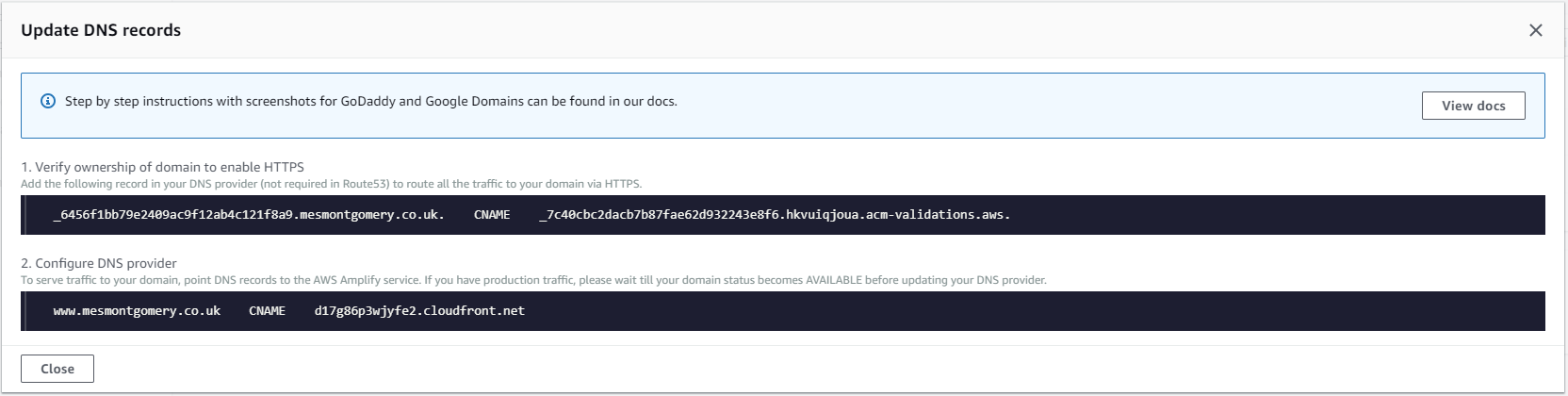

Connecting a domain

You’ll want to connect your domain name to the application. Amazon promotes its Route 53 one-click integration, though you may use externally supplied DNS by following their guidelines. If you only want one record, as I did, make sure to omit the root of the domain from the custom domain request.

Debugging

Only one issue has required me to troubleshoot the service: a functional difference between the two deployments. Syntax highlighting was not working in the Amplify-hosted build.

Initially, I thought it was a build difference as I never sought to learn the Hugo version in play.

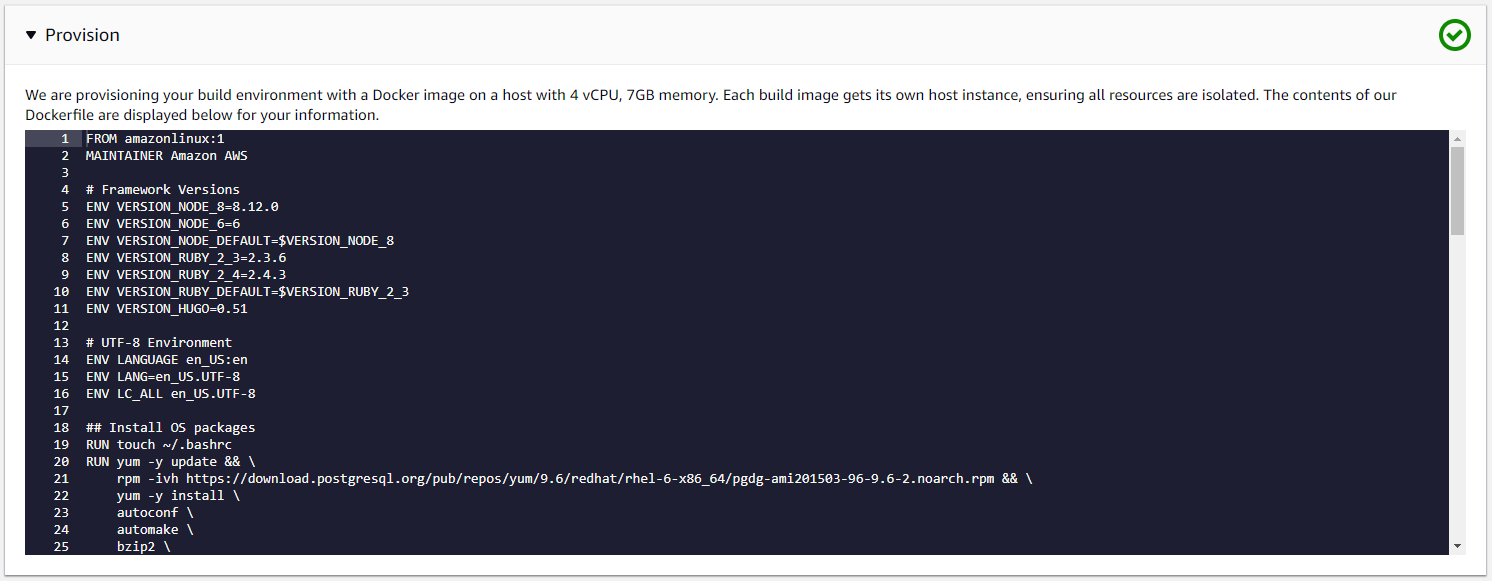

We can see this in the provisioning log:

ENV VERSION_HUGO = 0.51.

I was using a newer release of Hugo locally. However, rebuilding with the same version as Amplify did not reproduce the issue.

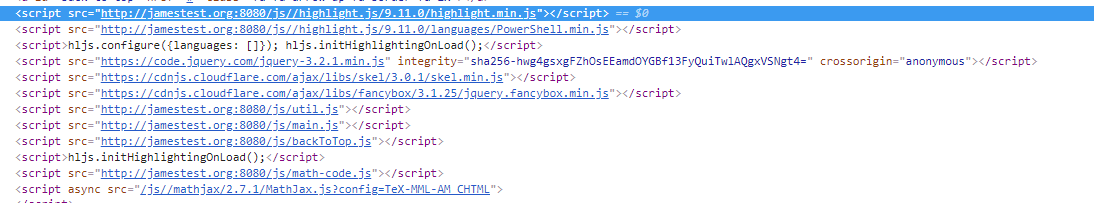

After reviewing the page source, I surmised that double slashes were causing the issue. Note the path for highlight.min.js:

\ in config.toml) leading to this was changed resolving the issue.
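A check like this can be automated. The following is a minimal sketch, not the method I used at the time: it scans a page's `src` and `href` attributes and flags any URL whose path contains a double slash. The function name and the example URL in the usage note are hypothetical, and the regular expression is a rough stand-in for a proper HTML parser.

```python
import re
from typing import List
from urllib.parse import urlsplit

# Rough pattern for src="..." and href="..." attribute values
ATTR_URL = re.compile(r'(?:src|href)\s*=\s*["\']([^"\']+)["\']', re.IGNORECASE)


def urls_with_double_slash(html: str) -> List[str]:
    """Return referenced URLs whose path component contains '//'."""
    flagged = []
    for url in ATTR_URL.findall(html):
        # urlsplit separates scheme and host from the path, so the '//'
        # after 'https:' and protocol-relative URLs are not flagged
        if "//" in urlsplit(url).path:
            flagged.append(url)
    return flagged
```

For example, given a page containing `<script src="https://example.com//js/highlight.min.js">`, the function returns that URL, while a protocol-relative reference such as `//cdn.example.com/a.js` is left alone.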

I’ve not found a way to change the Hugo version. It seems like it should be possible, as it’s an environment variable in the build process.

Another way to host Hugo with Amplify

It occurred to me that if the Hugo version were to cause an issue, then I would require another approach.

I created another repository based on the static files Hugo produces in the \Public folder. When you connect the Amplify Console to this, it defaults to a standard Web framework. It becomes a direct replacement for hosting static content as you would on Firebase.

I believe this is a more straightforward solution with greater control, and I will likely favour it.

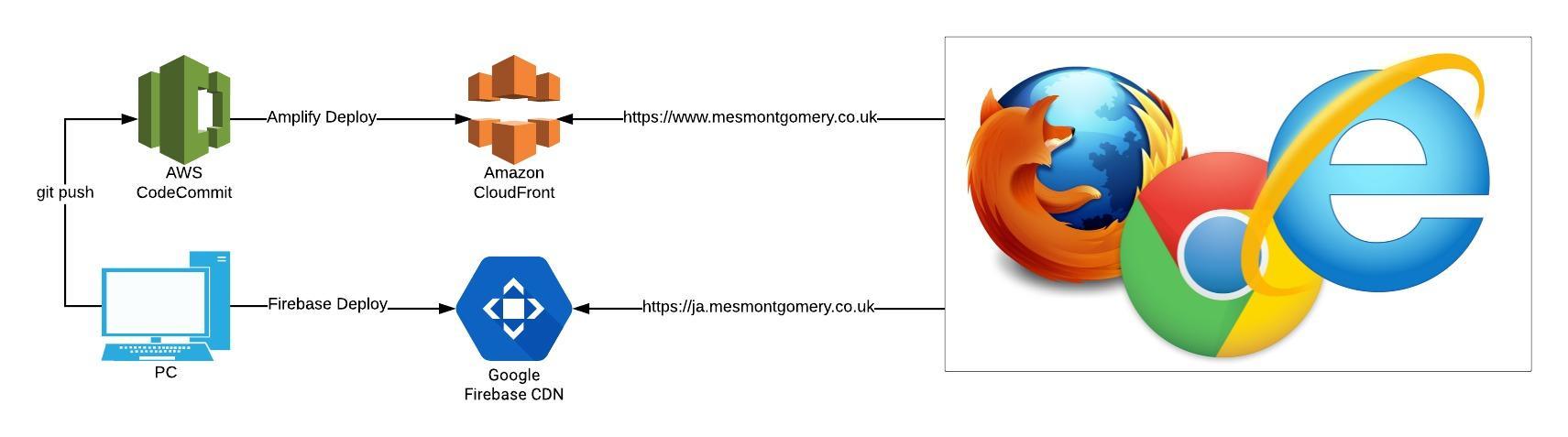

Visualising the solution

The result is two discrete deployments of the website hosted by different providers with global content distribution networks. In this active/passive model, I would need to intervene if one service was unavailable.
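Since I have to notice an outage or a content mismatch myself, a small check can help. The sketch below fetches the same page from each deployment, hashes the body, and reports whether both are reachable and identical. The function names are my own invention, and the URLs you pass in are up to you. Byte-for-byte comparison is a blunt tool: it assumes both stacks serve identical files, which may not hold if the builds differ.

```python
import hashlib
import urllib.request
from typing import Callable, Dict, Optional


def fetch(url: str, timeout: float = 10.0) -> Optional[bytes]:
    """Return the response body, or None if the request fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.read()
    except OSError:
        # URLError, HTTPError and socket timeouts are all OSError subclasses
        return None


def digests(urls: Dict[str, str],
            fetcher: Callable[[str], Optional[bytes]] = fetch
            ) -> Dict[str, Optional[str]]:
    """Map each deployment name to a SHA-256 of its body (None if down)."""
    result: Dict[str, Optional[str]] = {}
    for name, url in urls.items():
        body = fetcher(url)
        result[name] = hashlib.sha256(body).hexdigest() if body is not None else None
    return result


def summarise(found: Dict[str, Optional[str]]) -> str:
    """Describe the state of the deployments in one short line."""
    down = sorted(name for name, digest in found.items() if digest is None)
    if down:
        return "unavailable: " + ", ".join(down)
    if len(set(found.values())) == 1:
        return "in sync"
    return "content differs"
```

Usage would look like `summarise(digests({"firebase": firebase_url, "amplify": amplify_url}))`, with the two URLs pointing at the same page on each stack. The `fetcher` parameter exists so the logic can be exercised without touching the network.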

In conclusion

There was an issue with the site which had nothing to do with a network block. One of the CSS files was hosted externally and not available at times. I had realised that before completing this experiment but saw value in finishing. The complexity in building across multiple public cloud platforms for even a simple hobby site such as this can be telling.

I’ll leave you with this classic video (The Website is Down #1: Sales Guy vs Web Dude):

Acknowledgements

The Pro Git book I found wonderfully useful. I’ve also had this branching article bookmarked for some time and read it multiple times. One Myles Gray put me onto it.
